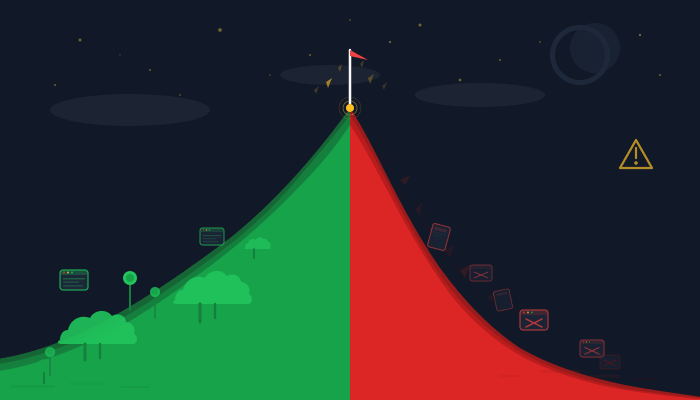

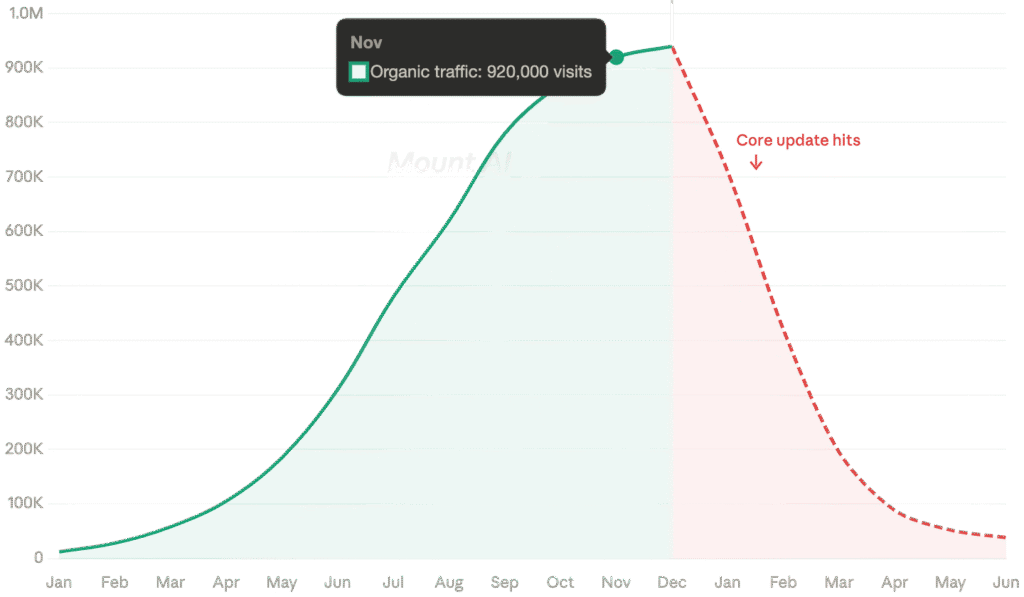

There’s a new cautionary shape showing up in Google Analytics dashboards across the SEO world. It looks like a mountain, with a sharp ascent on the left and a cliff-face drop on the right, and practitioners have started calling it “Mount AI.”

The pattern is simple. A website goes all-in on AI-generated content. Traffic climbs. Sometimes dramatically. Then a Google core update rolls through, and the line doesn’t just flatten. It plummets.

If you’ve been watching SEO Twitter (or whatever we’re calling it now), you’ve probably seen the screenshots. But the interesting part isn’t the anecdotal horror stories. It’s the data behind them.

The Numbers Behind the Crash

Malte Landwehr, CMO of Peec AI and former VP of SEO at Idealo, ran an analysis of the case studies and reference customers of a popular AI content tool. Not random users, but the flagship clients, the ones the vendor hand-picked for success stories. The results should give any content marketer pause:

- 57% experienced significant traffic declines

- 22% displayed the classic Mount AI shape, a steep rise followed by an equally steep collapse

The remaining sites with traffic losses didn’t even get the mountain. They skipped the peak entirely and went straight downhill.
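To make the shape concrete, here is a minimal sketch of how a traffic series could be labeled. It is not Landwehr's methodology, which the analysis above does not spell out. The function name `classify_traffic_shape` and both thresholds (`rise_factor`, `drop_fraction`) are assumptions chosen for illustration.

```python
from typing import Sequence


def classify_traffic_shape(
    visits: Sequence[float],
    rise_factor: float = 2.0,    # assumption: peak must be at least 2x the starting level
    drop_fraction: float = 0.5,  # assumption: final level must be at most 50% of the reference level
) -> str:
    """Label a traffic series as 'mount_ai', 'decline', or 'other'."""
    if len(visits) < 3:
        raise ValueError("need at least three data points")

    start, end = visits[0], visits[-1]
    peak = max(visits)
    peak_index = list(visits).index(peak)

    # A "mountain" needs a peak strictly inside the series that towers over the start.
    rose = (
        start > 0
        and peak >= rise_factor * start
        and 0 < peak_index < len(visits) - 1
    )
    # The collapse is measured from the peak, not from the starting level.
    collapsed = end <= drop_fraction * peak

    if rose and collapsed:
        return "mount_ai"
    # No meaningful peak: the site went straight downhill from where it started.
    if start > 0 and end <= drop_fraction * start:
        return "decline"
    return "other"


print(classify_traffic_shape([100, 300, 800, 900, 200]))
print(classify_traffic_shape([1000, 900, 600, 400]))
```

With monthly organic sessions as input, the first series has a peak well above its start and ends far below that peak, so it gets the mountain label. The second never rises and simply loses more than half its starting traffic.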

Landwehr deliberately chose not to name the tool, noting he didn’t consider it the worst offender in the market. His colleague Tomek Rudzki ran a complementary analysis at Peec AI and found similar patterns across other platforms, with one tool showing 75% of its featured clients suffering significant visibility losses.

These are the highlight reels. The clients these companies chose to showcase. If the best-case portfolio looks like this, imagine what the B-sides sound like.

The pattern is accelerating too. Each successive Google core update seems to get better at identifying and devaluing content that was scaled without meaningful human oversight. What worked in early 2024 stopped working by mid-2024, and what survived mid-2024 is getting caught in 2025.

Why This Happens (And Why It’s Not Really About AI)

Here’s where most of the discourse gets it wrong. The Mount AI crash isn’t a punishment for using AI. It’s a punishment for using AI as a replacement for editorial judgment rather than a supplement to it.

Google has been pretty explicit about this. Their guidelines don’t penalize AI-generated content. They penalize content that exists primarily to manipulate search rankings, regardless of how it was produced. AI just made it absurdly easy to produce that kind of content at scale.

Think of it this way. Before Large Language Models (LLMs), producing 500 thin, keyword-targeted articles required an actual content farm with writers, editors, and a production pipeline. The friction was a natural quality filter. Not a great one, but a filter nonetheless.

AI removed that friction entirely. You can now produce 500 articles in an afternoon. And when you can do something that easily, the temptation to skip the parts that actually matter (original research, expert perspectives, editorial review, genuine usefulness) becomes almost irresistible.

The sites hitting Mount AI didn’t fail because they used AI. They failed because they treated content production like a volume game and forgot that Google’s entire business model depends on surfacing content that people actually want to read.

The Double Visibility Penalty Nobody’s Talking About

Here’s where it gets worse, and where the 2025 version of this problem diverges from anything we’ve seen before.

When your site loses organic rankings, you don’t just lose Google traffic. You lose visibility in AI-generated answers too.

ChatGPT, Perplexity, Google’s AI Overviews, and Gemini all use organic search signals as part of how they decide which sources to cite. Organic search is still the discovery layer that AI systems rely on for grounding their responses.

So when a core update tanks your rankings, you’re not just losing one traffic channel. You’re losing presence across two discovery ecosystems simultaneously. And the second loss compounds the first, because reduced AI citations mean fewer brand impressions, fewer backlinks from people who discover you through AI answers, and fewer of the authority signals that help you recover in organic search.

Eighteen months ago, this compounding effect didn’t exist. Now it’s the default consequence of a ranking collapse. The recovery curve just got significantly longer and steeper.

What Separates the Survivors from the Casualties

Not every site using AI content is crashing. Some are doing fine. A few are thriving. The difference isn’t whether they use AI. It’s how.

The survivors tend to share a few characteristics:

- Editorial strategy precedes production. They decide what to write based on genuine content gaps, audience needs, and competitive positioning, not just keyword volume. AI accelerates the execution, but a human decides what’s worth executing.

- Expert differentiation is baked in. The content contains perspectives, data, or insights that couldn’t have been generated by prompting an LLM with a keyword. Proprietary research, practitioner experience, contrarian analysis, something that makes the piece irreplaceable rather than interchangeable.

- Link authority is earned, not assumed. The best AI-assisted content still attracts backlinks because it’s genuinely useful or insightful. The worst AI content sits in an authority vacuum because nobody has a reason to link to the 47th generic explanation of a topic that already has 46 identical versions.

- Human review is non-negotiable. Every piece gets edited by someone who understands the subject, not just someone who can check grammar. The editorial pass isn’t cosmetic. It’s structural.

The line between AI content that sustains rankings and AI content that crashes isn’t about volume. It’s about whether the pipeline includes the things that make content worth ranking in the first place.

How to Check If You’re Climbing Mount AI Right Now

If you’ve been scaling AI content, the worst thing you can do is wait for a core update to find out whether your approach is sustainable. Here’s how to run the diagnostic before Google runs it for you.

- Track your ranking volatility around core updates. If your positions swing dramatically every time Google pushes an update, that’s an early warning. Stable sites tend to stay stable. Volatile sites tend to eventually crash.

- Audit your content for editorial fingerprints. Pick 20 random articles from your AI-produced inventory. Can you identify a unique angle, original data point, or expert insight in each one? If most of them read like competent but generic overviews, you have a Mount AI vulnerability.

- Check your backlink distribution. Are your AI-generated pages attracting links organically? Or is your backlink profile concentrated entirely on your older, human-produced content? Pages with no inbound authority are the first to go when algorithms tighten.

- Monitor your AI search visibility. Are you being cited in ChatGPT, Perplexity, or Google AI Overviews? If your organic presence is strong but your AI visibility is weak (or declining), you may already be in the early stages of the compounding penalty.
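The first three checks can be roughed out in a few lines of Python before reaching for a full platform. This is a sketch under stated assumptions. The function names, the 14-day window, and the input shapes (a list of daily average positions, a list of URLs, a mapping of URL to referring-domain count) are all hypothetical and would need to be adapted to whatever your rank tracker and backlink tool actually export.

```python
import random
from statistics import mean
from typing import Iterable, List, Mapping, Optional, Sequence, Set


def update_swing(positions: Sequence[float], update_index: int, window: int = 14) -> float:
    """Absolute change in mean ranking position across a core update.

    `positions` holds one average position per day; `update_index` is the
    day the update started. The 14-day window is an assumption.
    """
    before = positions[max(0, update_index - window):update_index]
    after = positions[update_index:update_index + window]
    if not before or not after:
        raise ValueError("need data on both sides of the update")
    return abs(mean(after) - mean(before))


def sample_for_audit(urls: Iterable[str], k: int = 20, seed: Optional[int] = None) -> List[str]:
    """Pick up to `k` distinct URLs at random for a manual editorial review."""
    pool = list(urls)
    rng = random.Random(seed)  # a seed makes the sample reproducible
    return rng.sample(pool, min(k, len(pool)))


def ai_backlink_share(referring_domains: Mapping[str, int], ai_urls: Set[str]) -> float:
    """Fraction of all referring domains that point at AI-produced pages."""
    total = sum(referring_domains.values())
    if total == 0:
        return 0.0
    ai_total = sum(count for url, count in referring_domains.items() if url in ai_urls)
    return ai_total / total


# Example with made-up numbers: positions jump from 5 to 12 at the update.
print(update_swing([5, 5, 5, 5, 12, 12, 12, 12], update_index=4, window=4))
print(ai_backlink_share({"/guide": 8, "/ai-post": 2}, {"/ai-post"}))
```

A large swing that repeats across several updates is the volatility warning described above, and a backlink share near zero for AI-produced pages points to the authority vacuum. The random sample only selects the articles; judging whether each one has a unique angle is still a human task.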

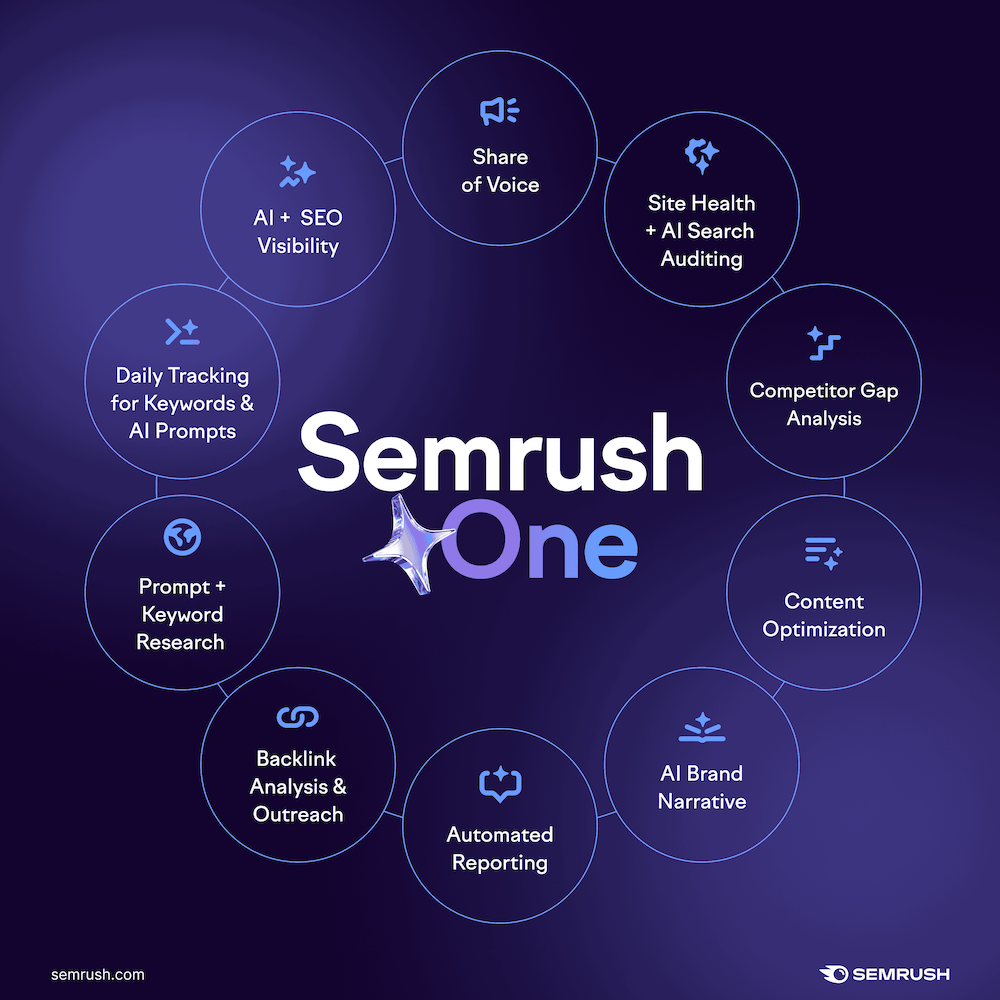

For the diagnostics, you’ll need a tool that covers both traditional SEO metrics and AI visibility tracking. Semrush One is worth looking at here, primarily because it bundles the standard SEO toolkit (site audit, position tracking, backlink analytics) with their newer AI Visibility reports, which track brand citations across ChatGPT, Perplexity, Gemini, and Google’s AI surfaces.

It’s one of the few platforms where you can see organic ranking health and AI citation presence in the same dashboard, which matters when you’re trying to catch the double visibility penalty before it compounds.

Full disclosure: The link above is an affiliate link. If you sign up through it, I earn a commission at no extra cost to you. I only recommend tools I’d use myself.

The Bigger Picture

Mount AI is, in many ways, a replay of every SEO shortcut cycle we’ve seen before. Keyword stuffing had its moment. Link farms had theirs. Private Blog Networks (PBNs) had a good run. Each one exploited a temporary gap between what was possible and what Google could detect, and each one eventually collapsed when the detection caught up.

AI content at scale is the same pattern with better technology. The gap between production capability and algorithmic detection was wider than usual, which made the initial returns more dramatic. But the gap is closing, and it’s closing faster than most practitioners expected.

The websites that will still be standing a year from now aren’t the ones that produced the most content. They’re the ones that used AI to produce better content, faster, without sacrificing the editorial judgment, subject expertise, and genuine usefulness that make content worth ranking.

The mountain only has one path down. Best not to climb it in the first place.
